NVIDIA (NVDA) announced on Monday that its A100 graphics processing unit (GPU) -- the chip designed specifically for artificial intelligence (AI), high-performance computing (HPC), and analytics use cases -- just got a significant upgrade. The latest version of the A100 now has double the memory of its predecessor, "providing researchers and engineers unprecedented speed and performance to unlock the next wave of AI and scientific breakthroughs."

The newest edition boasts 80 GB of GPU memory, twice the 40 GB available in the previous version. The high-speed chip powers NVIDIA's HGX AI supercomputing platform -- a turnkey AI processor in a box. The company touts the A100 as "the world's fastest data center GPU." The chip will also be found at the core of NVIDIA DGX A100 and DGX Station A100 systems, the company's other AI supercomputers, both of which launched today and are expected to ship this quarter.

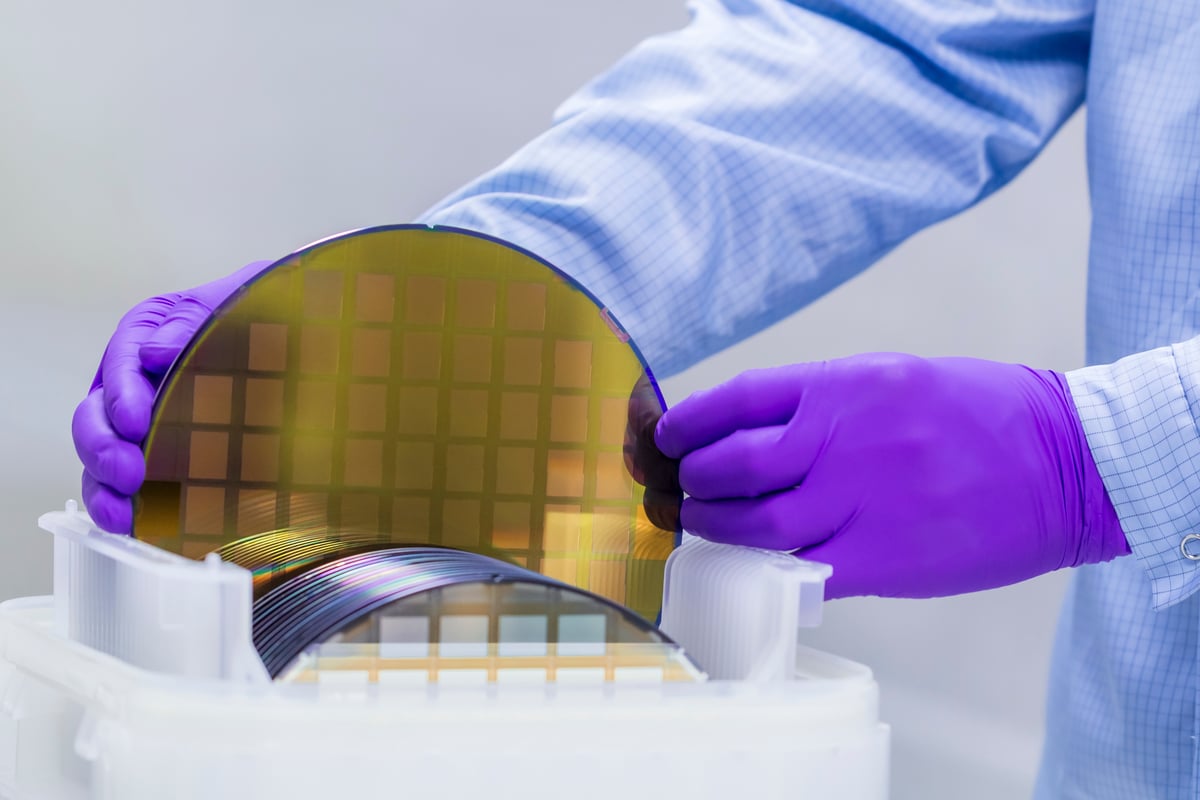

Image source: NVIDIA.

NVIDIA has a vested interest in blazing new trails in AI and HPC. In the second quarter, the company's data-center segment -- which also includes processors used in cloud computing and data centers -- became NVIDIA's biggest growth engine, generating revenue of $1.75 billion, up 167% year over year. More importantly, the data-center business surpassed the company's gaming segment and was responsible for 45% of revenue in the quarter.

The tech giant's GPUs have become a staple in each of the world's largest cloud-computing operations. NVIDIA's relentless pace of innovation, as illustrated by these most recent advances, will help drive its growth for years to come.