What happened

After falling through much of last month, dropping again Monday, and rebounding Tuesday, Facebook (FB) stock took its latest turn for the worse Wednesday morning, with shares down 1.9% as of 10:05 a.m. EDT.

What caused today's decline? Several things, actually.


So what

For one thing, Reuters reported last night that Facebook's six-hour-long network outage Monday caused a surge in interest in alternative platforms. Messaging app Telegram says it added more than 70 million accounts during the outage as WhatsApp and Facebook Messenger went down alongside the flagship social network. And yes, that's just a tiny fraction of Facebook's 3.5 billion users, and no, those folks didn't necessarily cancel their Facebook memberships and switch over to Telegram entirely -- but Facebook's fumble does seem to have given Telegram an opportunity to introduce itself to a lot of Facebook users and perhaps steal a bit of growth from the social media powerhouse.

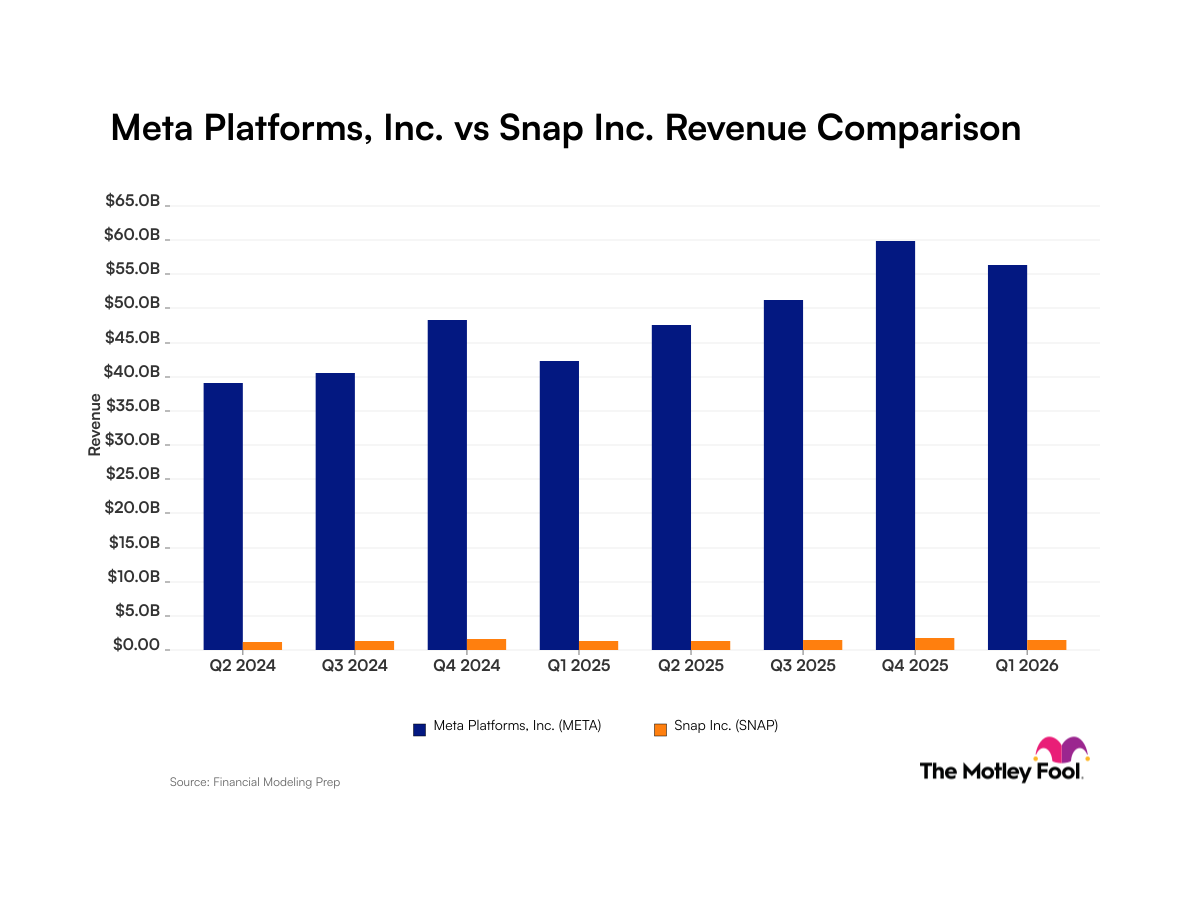

Telegram wasn't the only company to profit from Facebook's misfortune. As Bloomberg reports this morning, Facebook rival Snap (SNAP) saw a 23% boost in time spent on its Android app as Facebook went dark on Monday. As you'll recall, Piper Sandler reported yesterday that Snap is already the favorite social media app among American teens -- and gaining market share. Facebook's Monday gaffe isn't going to do anything to change that trend.

Now what

If you ask me, though, the thing that may be worrying Facebook investors most today is CEO Mark Zuckerberg's recent blog post responding to both The Wall Street Journal's reporting on Facebook and whistleblower Frances Haugen's testimony before Congress.

In roughly 1,300 words, Zuckerberg defended his company's efforts to promote the "safety, well-being and mental health" of Facebook users, and argued that the accusations that have been leveled against Facebook "don't make any sense":

"If we wanted to ignore research, why would we create an industry-leading research program to understand these important issues in the first place? If we didn't care about fighting harmful content, then why would we employ so many more people dedicated to this than any other company in our space -- even ones larger than us? If we wanted to hide our results, why would we have established an industry-leading standard for transparency and reporting on what we're doing? And if social media were as responsible for polarizing society as some people claim, then why are we seeing polarization increase in the US while it stays flat or declines in many countries with just as heavy use of social media around the world?"

Of course, no one -- not even the Journal -- is accusing Facebook of "ignoring" research or harmful content, or of being solely responsible for the polarization of American society. The accusations are more that Facebook's honest attempts to fix the problems sometimes ended up making them worse, and that when research revealed a possible fix that risked weakening user engagement (and endangering Facebook's profits), the profit motive won.

Either way, these problems persist -- and until they get fixed, the risk that Congress will move to tighten regulatory oversight of Facebook, whatever the cost to Facebook's growth prospects and profits, remains elevated.